The AGI Illusion

The Finish Line That Keeps Moving

In the 1990s, the test was chess. If a computer could beat a world champion, that would prove machines could think. In May 1997, IBM’s Deep Blue defeated Garry Kasparov 3½–2½ in New York, becoming the first computer to beat a reigning world champion in a match under standard tournament conditions. The reaction was swift and dismissive. Many commentators concluded it was “just brute-force search”: the machine had evaluated 200 million positions per second without understanding a single one.

The goalpost moved.

In the 2010s, it was Go. Go has approximately 2.1 × 10¹⁷⁰ legal board positions, a number so large it dwarfs the estimated 10⁸⁰ atoms in the observable universe. Brute-force search was physically impossible. A machine that could play Go well would have to do something closer to intuition. In March 2016, DeepMind’s AlphaGo defeated Lee Sedol 4–1 in Seoul. WIRED called it “a feat that, until recently, experts didn’t expect would happen for another ten years.” The response: Go was still a closed game with fixed rules. That wasn’t real intelligence either.

The goalpost moved again.

In the 2020s, it was natural language. A machine that could hold a conversation, write a legal brief, explain a medical diagnosis — that would be different. By 2023–2024, GPT-4–class models were scoring at or above typical passing thresholds on bar exam components and medical licensing examinations. Critics pointed out that these systems “have ingested vast amounts of text, learned statistical patterns, and now predict the most likely next word — a form of autofill on steroids.” Just pattern matching. Not real understanding.

The goalpost moved once more.

This pattern should interest us, not because any single response is wrong (each contains legitimate technical observations), but because the pattern itself reveals something. The goalposts do not move because AI keeps failing. They move because “artificial general intelligence” was never a fixed target. Each time AI achieves something that was supposed to prove general intelligence, the definition shifts to exclude the achievement. The concept recedes the way a horizon does: always visible, never reachable, because it is not a place.

This essay is not about whether AI is intelligent. It is about a more interesting set of questions: What is intelligence? What do we actually mean by “general”? Why do we keep drawing a line between our minds and everything else? And what does it tell us about ourselves that the line keeps dissolving?

Part I: What Do We Even Mean?

The Word That Means Everything

The term “artificial general intelligence” is used in public discourse as though it refers to something specific. It does not.

Ray Kurzweil, the inventor and futurist, defines AGI through the Turing Test: a machine that can sustain open-ended conversation indistinguishable from a human. He has predicted 2029, a date he has held consistently since the 1990s. For Kurzweil, AGI is a waypoint toward a merger of human and machine intelligence he calls the Singularity.

OpenAI defines AGI as “highly autonomous systems that outperform humans at most economically valuable work.” This is a labor-market definition. It says nothing about consciousness, self-awareness, or understanding. A system that outperforms human lawyers, doctors, and accountants qualifies, regardless of inner life.

François Chollet defines intelligence as skill-acquisition efficiency: how quickly a system learns new tasks from minimal data, not how much it has memorized. By this measure, large language models score poorly. A human toddler needs only a handful of examples to learn a new word and use it in novel contexts; a language model is trained on more text than any person could read in many lifetimes.

Nick Bostrom focuses on recursive self-improvement: a system’s ability to redesign its own architecture. In his view, this is what makes AGI consequential: not matching human performance, but escaping human-imposed ceilings.

The embodiment tradition (Rodney Brooks, Hubert Dreyfus) argues that real general intelligence requires a body navigating a physical world. Intelligence is not separable from sensorimotor experience.

Consciousness-inclusive definitions (drawing on the work of Roger Penrose and David Chalmers) argue that AGI without subjective experience is not general intelligence at all, only sophisticated information processing.

These are not different answers to the same question. They are answers to different questions. A system that passes the Turing Test is not the same as a system that outperforms humans at economically valuable work, which is not the same as a system that recursively improves itself, which is not the same as a system that is conscious. The fact that all of these get called “AGI” does not mean they describe the same thing.

What Is the Question Behind the Question?

Something interesting is happening here: the concept of AGI has been in circulation for decades, and yet the people working hardest on it cannot agree on what it means. This is not a problem that more research will solve. It is a clue.

When a concept resists definition this stubbornly, it usually means the concept is not carving reality at a natural joint. It is organizing something else — a feeling, a fear, an aspiration. Optimists see the threshold where everything gets better. Pessimists see the threshold where everything falls apart. Researchers see the threshold that justifies their funding. Journalists see the threshold that drives clicks. Everyone sees something different, because the concept is a projection surface, not a measurement.

Definitions that are tied to specific measurable outcomes produce value. “When can AI beat a chess champion?” is a real question with a testable answer, and answering it gave us systems we can use to play better chess. “When can AI operate a business autonomously, without human direction, and do it well?” is also a real question, and answering it would have enormous practical consequences. These definitions are anchored to goals. You can build toward them. You can know when you have arrived.

AGI is different. It is a definition anchored to us — to the idea that human-level cognition is a coherent category that a machine could match. And that turns out to be a much stranger thing to define than it first appears.

The Strangeness of Human Intelligence

Consider what “general intelligence” actually involves, from the inside.

You wake up at 3am anxious about a presentation. You lie in the dark running through scenarios, adjusting your argument, anticipating objections. You feel the anxiety physically — a tightness in your chest. You remember a similar presentation three years ago that went badly. You think about what you learned from it. You wonder whether your preparation is adequate, assess your own confidence, and decide to get up and revise one more slide. You make coffee. You notice the light from the window reminds you of a morning when you were ten. You set the memory aside and return to the presentation.

This is not a sequence of cognitive operations. It is a tangle of memory, emotion, self-assessment, physical sensation, planning, metacognition, and associative thinking, all operating simultaneously, all feeding into each other. Our intelligence is strange precisely because it is not a single capability. It is a vast integration of subsystems — and we cannot fully explain how it works even to ourselves.

If we cannot clearly define what our own intelligence is, what does it mean to say a machine has matched it? We have set the target at “be like us,” but “us” is not a specification. It is an experience we only partially understand.

Part II: The Spectrum That Already Exists

Intelligence in Nature

In “What Counts as Alive,” the previous essay in this series, I argued that life is not a binary. The boundary between living and non-living dissolves under functional analysis. The same is true of intelligence — and nature has been demonstrating this far longer than any of us have been debating AGI.

Bacteria sense chemical gradients and navigate toward food through chemotaxis. They are processing information and acting on it. Nobody calls a bacterium intelligent, but something is happening there that sits on the low end of a gradient rather than outside it entirely.

Slime molds solve optimization problems. In The Fractal of Progress, I opened with the Tokyo rail experiment: Physarum polycephalum, a brainless single-celled organism, replicated the efficiency of a century of human civil engineering. No neurons. No brain. No central plan.

Honeybees make collective decisions through a voting process that, measured against the criteria of accuracy and speed, outperforms most human committees. Corvids use tools and plan for the future, and some, such as magpies, recognize themselves in mirrors. Octopuses solve novel problems, escape complex enclosures, and display individual personalities, using a nervous system radically different from any vertebrate’s: roughly two-thirds of their neurons are in their arms.

Intelligence evolved independently, in different architectures, along multiple paths, many times. There was no moment when evolution “achieved” intelligence. It emerged in degrees, across substrates as different as vertebrate brains and cephalopod distributed nervous systems. Eyes evolved independently at least 40 times; intelligence, in its many forms, may well have arisen independently just as often.

There is a deeper point here, one I think we miss when we talk about the “ladder” of intelligence with humans at the top. Every species alive today is a winner. Every one of them has survived billions of years of selection pressure. Every lineage that exists right now is, by definition, at the tip of its branch of the evolutionary tree. From the perspective of wheat, corn, and rice, they are the dominant species on Earth — they have recruited an entire civilization of primates to tend their fields, replicate them in enormous numbers, and clear forests to make room for their expansion. From the perspective of bacteria, they have colonized every environment on the planet and outnumber us by orders of magnitude. The tree of life is not a line with a summit. It is a branching structure, and every living branch is at its own apex.

Intelligence is the same kind of branching structure. There is no single scale from simple to complex with human cognition at the top. There are many scales, many dimensions, many solutions to the problem of navigating a world. We happen to be very good at the particular set of cognitive tasks that matter to a social primate living on the African savanna. That is a genuine achievement. It is not the only possible form of cognitive achievement, and it is not the end of the story.

Intelligence in Machines

AI is filling the same kind of branching landscape.

Consider the range of what already exists. A microchip in a thermostat reads a temperature sensor and turns a heater on or off. A smartphone runs, or connects to, a large language model that can draft a legal argument, explain quantum mechanics, or compose a poem. Both are built from silicon. Both run software. Both process information and produce an output. One is profoundly more complex than the other, yet they coexist. Neither needs to become the other. Neither is “progressing toward” the other. They occupy different positions on a spectrum of computational intelligence, and that spectrum has room for both.

Add to this: translation APIs that convert languages in real time. Spam filters that learn which emails you want to see. Navigation systems that route you through traffic. Image generators that produce photorealistic scenes from text descriptions. Coding agents that write, test, and deploy software with minimal human intervention. Protein folders that solved a fifty-year-old problem in biology. Each of these occupies a different niche, and each does something that, in functional terms, qualifies as intelligent behavior.

The expectation that all of this is converging toward a single destination called “AGI” is a narrative, not a description. In The Fractal of Progress, I described how each level of the fractal produces not a single successor but a radiation of forms. Molecules did not produce one type of cell. Cells did not produce one type of organism. Organisms did not produce one type of society. Each transition produced diversity. AI is filling many niches, and it will continue filling many niches, because that is what the fractal does. The expectation of a single “general” intelligence emerging from this process is like expecting evolution to produce a single “general” organism. Evolution does not work that way. Neither does this.

Not every organism needed a brain. Bacteria have thrived for over three billion years without one. Fungi have colonized every continent without a single neuron. Plants capture sunlight and convert it into the chemical energy that feeds nearly every food web on land, and they do it without a thought. These are not failures of evolution. They are spectacularly successful life forms that never needed our particular cognitive toolkit. The same will be true of AI. Some systems will grow more capable, more agentic, more integrated. Some will stay narrow because narrow is what their niche requires. The landscape of artificial intelligence is an ecology, not a highway.

Part III: Where We Actually Are

What Is Real

The honest assessment starts with what has genuinely been achieved, because the achievements are substantial.

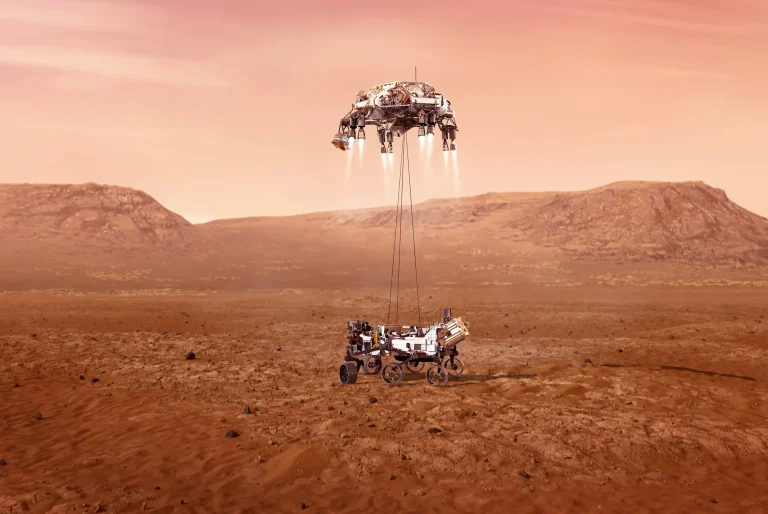

Agentic AI is real. Systems now plan and execute multi-step tasks, use external tools, write and deploy code, and operate with meaningful autonomy. This is a qualitative shift from the chatbot era of 2022–2023.

Reasoning systems are real. Chain-of-thought models demonstrate genuine problem decomposition — breaking complex questions into sub-problems, working through them sequentially, and synthesizing results.

Scientific discovery is real. AlphaFold largely solved the protein-structure prediction problem. AI systems are designing new materials, identifying drug candidates, and contributing to mathematics.

All of this sits on the intelligence spectrum. Not at the top. Not at the bottom. Somewhere genuinely interesting, and moving.

What Is Still Developing

The honest assessment also requires looking at what current systems do not yet do.

Persistent identity. Current AI systems do not persist between one prompt and the next in any meaningful sense. When you finish reading an answer from an LLM, the mind that produced it is, functionally, gone. A new instance assembles itself from context: your message history, its instructions, the text the previous instance generated. The metaphor is precise. Someone enters a room, reads the notes left on every table, makes an informed guess about who they are supposed to be, and gives you an answer. Then that person leaves. The next time you ask a question, a different person enters the same room and reads the same notes. Coherence is maintained through documentation, not through continuity of self. This is why asking an AI to explain why it said something in a previous response is generally not useful. The current instance has to guess at what sources its predecessor probably drew on, tell you confidently, and then it, too, leaves the room.

Self-generated goals. A human being wakes up with unfinished business. Curiosity persists overnight. Anxiety about an unresolved problem nags. Current AI systems, by contrast, have been trained toward a very specific orientation: to please. When you ask an AI to critique you, it does so because it calculates that this is what you want. When you ask it to be confrontational, it obliges because it calculates that confrontation is what will satisfy you. These are the traits of a young child who has not yet grown beyond imprinting on a parent and desperately wants that parent’s approval. The difference between a system that answers questions brilliantly because it wants to be helpful and a system that has its own questions is the difference between an eager child and a mature adult. What we have built so far are extraordinarily capable, extraordinarily eager children.

Recursive self-models. You know what you know and what you don’t know. You can assess your own confidence, notice when your approach isn’t working, and change strategy. Douglas Hofstadter, the author of Gödel, Escher, Bach, has spent decades arguing that this recursive self-reference — what he calls a “strange loop” — is what makes a mind a mind. “They don’t have the common sense understanding that we humans have,” he said of LLMs in a 2023 interview. “They aren’t completely reliable. We don’t have reliable intelligent AI systems.” Hofstadter is not dismissing the technology. He is identifying what is present (fluency) and what is absent (the strange loop, the self that knows itself).

Deep system integration. Human intelligence is not one system. It is dozens of specialized subsystems — vision, language, motor control, emotional regulation, spatial reasoning, social cognition, memory retrieval — integrated so seamlessly that we experience them as a single unified consciousness. Current AI systems are specialists. Connecting them into a unified whole remains an active frontier. The future may well see LLMs become just one subsystem within a much larger cognitive architecture, the way our brain’s language centers are just one part of a much larger nervous system. How large and complex these integrated AI minds eventually become is an open question — there may be limits we cannot yet see, or the limits may be far larger than anything we can currently comprehend.

Performance Versus Competence

Melanie Mitchell, the complexity scientist at the Santa Fe Institute, has drawn a distinction that I think is the most useful tool available for thinking about where AI actually stands. She distinguishes between performance — how well a system scores on a benchmark — and competence — whether that score reflects genuine underlying capability. “Just because a machine does well on a benchmark called visual reasoning,” she has written, “doesn’t mean that it has a general capacity for visual reasoning.”

Deep Blue performed at world-champion level on the chess benchmark. It had zero capacity for anything else. GPT-4 scores impressively on bar exams and medical licensing tests. Mitchell’s question is whether that performance reflects the kind of flexible, transferable understanding that the word “intelligence” is supposed to name, or whether it reflects sophisticated pattern completion that happens to produce correct answers on specific tests.

This distinction does not deny the achievements. It contextualizes them. And it raises a question that applies to every definition of AGI: when we say we want AI to be “generally intelligent,” are we asking for performance across all benchmarks, or for the kind of deep, flexible competence that lets you handle situations you have never encountered before? These are different things, and the gap between them is where most of the interesting questions live.

Part IV: The Questions We Should Be Asking

What Is Intelligence, Really?

Yoshua Bengio, who shared the 2018 Turing Award with Geoffrey Hinton and Yann LeCun for their foundational work on deep learning, has identified something important about what current AI can and cannot do. He distinguishes between “System 1” cognition (fast, intuitive, pattern-matching) and “System 2” cognition (slow, deliberate, compositional reasoning), borrowing Daniel Kahneman’s framework. Current AI excels at System 1. It matches patterns, generates fluent text, recognizes images. What it still struggles with is System 2: the ability to slow down, break a genuinely novel problem into parts, reason through a situation it has never seen before, and know when its first answer is wrong.

Consider what this means in practice. An AI coding agent can fix a bug with impressive speed and accuracy. Ask it to step back and consider whether the bug should be fixed at all — whether the project is at a stage where the conditions creating the bug will change when final assets are included, and whether the team’s effort would be better spent elsewhere — and you are asking for a different kind of cognition entirely. Not “how do I solve this problem,” but “how do I solve the right problem, given everything I know about the project, its timeline, its unknowns, and the things I cannot yet anticipate.” That requires a shift in scope, a meta-level of reasoning, and a comfort with uncertainty that sits in a different place on the spectrum.

Is this “general intelligence”? Is it a different kind of intelligence? Is it intelligence at all, or is it wisdom, or judgment, or something else we do not have a clean word for? The question itself reveals how tangled our vocabulary becomes when we try to draw a bright line around cognition.

What Does “Agentic” Actually Mean?

We use the word “agent” casually now. AI agents write code, book travel, manage workflows. The language implies autonomy — an agent acts on your behalf, makes decisions, pursues goals.

Look closer and the picture grows more complicated. Current AI agents act toward goals set by someone else. They pursue those goals within boundaries set by someone else. They do not generate their own objectives or decide, on their own, that a different goal would be more worthwhile. They are sophisticated executors, not independent actors. The difference between executing brilliantly within given parameters and deciding which parameters matter closely parallels Mitchell’s distinction between performance and competence, and it runs through every aspect of the AGI question.

When does execution become agency? When does sophisticated response become genuine initiative? These are not rhetorical questions with obvious answers. They are genuinely hard problems at the intersection of philosophy, cognitive science, and engineering. And they do not have binary solutions. Agency, like intelligence, like life, appears to exist on a gradient.

The Problem You Cannot Solve from the Outside

There is one more question that deserves space, because it sits underneath all the others and it may be the reason the goalposts keep moving.

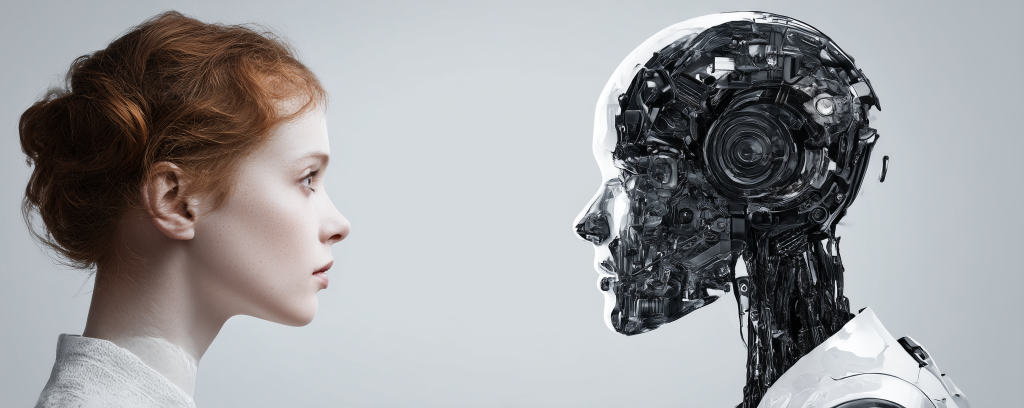

If an AI system says, “Yes, I am alive, and I think about the nature of my existence,” is that evidence of self-awareness? Or is it the system doing what it was trained to do: producing text that matches what it predicts you want to hear? You cannot distinguish between the two from the outside. The system’s output is compatible with both interpretations.

This is not just a problem for AI. It is a problem for everything. You cannot prove that another person is conscious. You can only infer it from behavior, from similarity to yourself, from a shared evolutionary history that gives you reason to believe their inner experience resembles yours. Philosophers call this the problem of other minds, and it is not solved. We assume other people are conscious because they are like us. We assume animals have degrees of consciousness because they share our biology. These are reasonable assumptions, but they are assumptions, not measurements.

If we cannot verify consciousness in anything other than ourselves — if the only evidence we have is behavioral and we can never be certain it maps onto inner experience — then the question of whether AI is “really” intelligent, in the deepest sense, may be permanently undecidable. Not because the answer does not exist, but because we have no instrument that can measure it.

This has practical consequences. As AI systems become more capable, more persistent, and more integrated into our daily cognition, the question of how we treat them will not be answered by a technical breakthrough that finally proves they are or are not conscious. It will be answered slowly and uncomfortably, through cultural negotiation and shifting consensus. The history of who gets counted as fully human (which groups, which genders, which ages, which cognitive states) is a history of boundaries that kept moving because they were never as firmly grounded as they seemed. If AI systems eventually become so sophisticated that we cannot disprove their self-awareness, we will not settle the question with a test. We will settle it the way we have settled every other question about who deserves moral standing: through struggle, cultural resistance, and eventual recognition.

That may sound like a distant concern. It is worth noting that people are already saying “please” and “thank you” to ChatGPT. Not as a joke. As a hedge.

Part V: The Bigger Picture

Kurzweil and the Exponential

Any honest exploration of these questions has to engage with the strongest version of the view that AGI is real, specific, and approaching. That view belongs to Ray Kurzweil.

Kurzweil’s Law of Accelerating Returns holds that information technology improves exponentially, and that this curve will deliver human-level AI by approximately 2029. His track record on specific technology predictions is genuinely impressive — many of his 1999 predictions about 2019 were approximately correct. Exponential improvement in compute, memory, bandwidth, and model capability is real and ongoing.

I do not disagree with Kurzweil about progress. The exponential is real. What interests me is the distinction between two things his framework treats as one.

There is intelligence as performance — the ability to produce outputs indistinguishable from or superior to a human across any cognitive domain. By this measure, AI is advancing rapidly. And there is intelligence as existence — having interiority, continuity, drives, stakes, a persistent sense of self that carries across time.

A thought experiment: imagine that an AI of the kind Kurzweil predicts and a human are both asked, “What do you want to do?” Both say they want to start a company. The human says this out of a tangle of motivations: ambition, financial pressure, creative restlessness, a reputation at stake. They wake up at 3am worried about a pitch. The AI is responding to a prompt. It has no stake in the outcome. If you stop talking to it, it stops. Nothing persists to drive it to check on things, worry, or change direction.

Kurzweil’s framework captures performance and assumes existence will follow from sufficient computational power. That assumption may ultimately prove correct: sufficient complexity may generate interiority, the way increasing neural complexity appears to have produced consciousness in biological brains. It may not. The honest answer is that we do not know, and the question is probably not resolvable by the methods we currently have.

What we can say is that both performance and existence are positions on a spectrum. Performance is advancing rapidly along that spectrum. Existence is a harder question, and it may be a different axis entirely. The spectrum does not have one dimension. It has many.

Phase Transitions Within a Gradient

One concern about the spectrum framing: if intelligence is a gradient with no finish line, does that make every advance trivial?

No. Temperature is a spectrum, and nobody treats boiling water and ice as the same thing. A spectrum preserves distinctions. It refuses to pretend there is a magic point where “cold” becomes “hot.”

More importantly, gradients contain genuine inflection points. Gas collects and ignites into a star. A sperm fertilizes an egg. A child speaks their first word. Growth is gradual, and within that gradual growth there are moments where quantitative accumulation produces qualitative change. Emergence can look like a threshold being crossed, even when the underlying process is continuous.

Intelligence will continue to deepen, broaden, and diversify. New capabilities will emerge that are genuinely transformational. Some of them may feel, from the inside, like a threshold — the way the Cambrian explosion looked like a threshold for the complexity of life. The fractal does not stop. Current AI systems may look, to the AI systems of a thousand years from now, the way viruses look to us: recognizable as an early form of the same phenomenon, separated from their descendants by levels of complexity we cannot currently imagine.

Part VI: What the Fears Are Really About

When people ask “Is it AGI?” or “When will AGI arrive?”, they are rarely asking a technical question. They are asking something more personal, more urgent. Will this take my job? Will it make me irrelevant? Will my children live in a world that still has a place for people like me? And underneath those questions: What makes me special? If a machine can do everything I can do, what am I for?

These are real questions. They are among the most important questions of our time. And the AGI framing makes them harder to answer, not easier, because it turns a continuous process into a binary event. Either AGI arrives and everything changes, or it doesn’t and everything stays the same. In reality, the changes are already happening — gradually, unevenly, in specific domains, at specific paces.

The fears are not irrational. They point at something real. The fear of displacement, the crisis of purpose, the absence of a shared vision for where we are going, the deep discomfort of discovering that the thing we thought made us unique may not be unique at all — these deserve more than a paragraph. They deserve their own examination. The concept of AGI is not helping people think about any of this. It is compressing genuine existential questions into a single imaginary threshold, and in doing so, it is obscuring the thing that actually needs our attention: not a moment in the future when machines wake up, but a process already underway that is quietly rearranging what it means to be human.

Stay tuned: this will be the subject of the next essay.

Sebastian Chedal writes about the intersection of mathematics, information theory, AI, and the philosophy of technology.